HBM vs HBF — Why the Memory Hierarchy Is Being Stretched

If you strip away the marketing language, the AI hardware race right now is about one thing: moving data is expensive.

Not financially. Electrically.

Every time information leaves memory, crosses a bus, hits a processor, and comes back, energy gets burned. At scale, that energy becomes heat. Heat becomes infrastructure. Infrastructure becomes cost.

That’s why HBM exists.

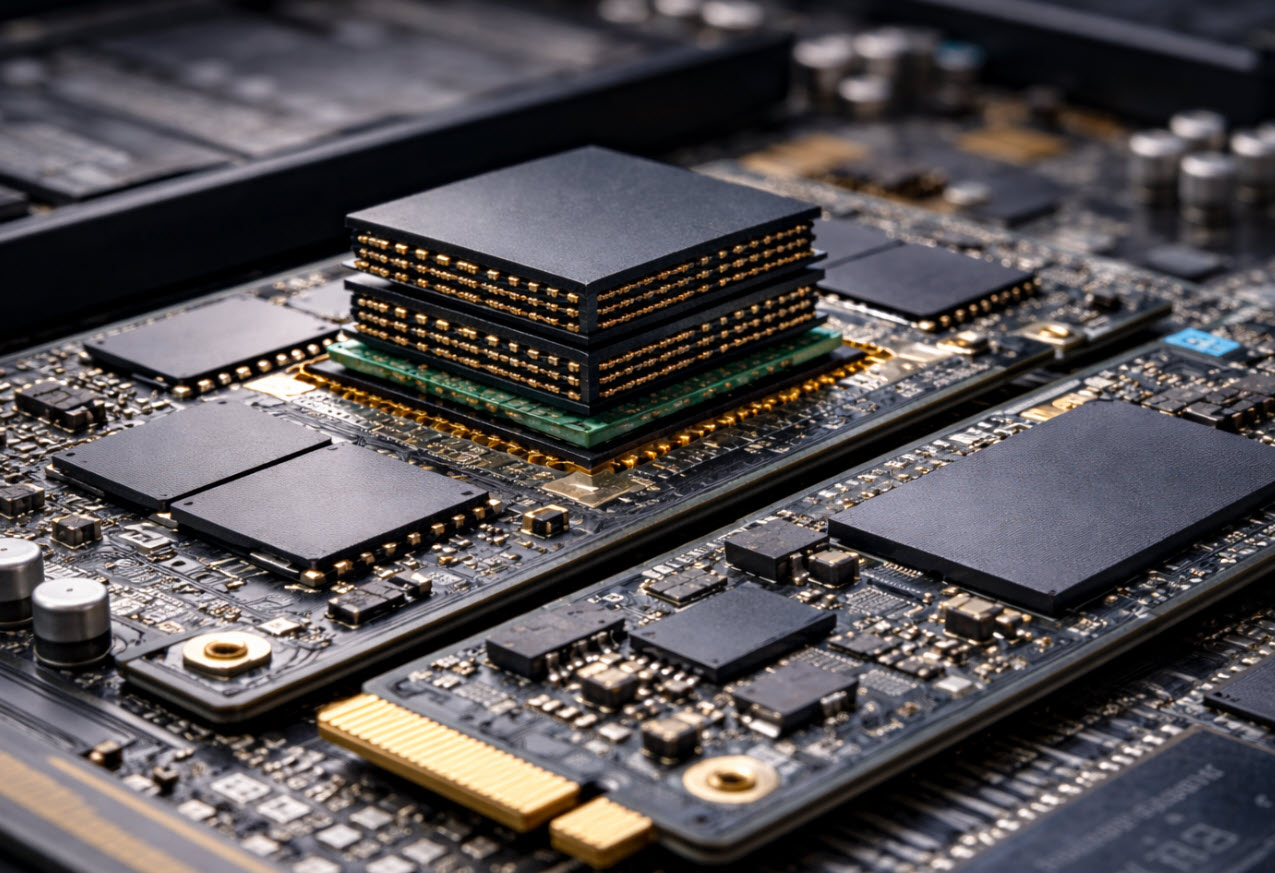

High Bandwidth Memory is stacked DRAM sitting millimeters from the GPU die, on the same package. Wide buses. Short traces. Massive parallel lanes. The whole design is built around minimizing travel distance.

It’s brutally fast. Terabytes per second fast. And correspondingly expensive.

HBM is where AI training lives. When you’re updating billions of weights repeatedly, latency and bandwidth aren’t optional.

If you want a deeper historical backdrop on how flash evolved into today’s density race, see our piece on flash memory — where did it start?

But training isn’t the only workload anymore.

Inference — actually running the model — behaves differently. It streams, references, and reads far more than it rewrites. Still heavy. Just not the same access pattern.

That shift is what opened the door for HBF.

High Bandwidth Flash isn’t a retail product yet. It’s a structural idea: take NAND flash, move it closer to compute, widen the interface, expose more parallelism, and create something that sits between DRAM and SSD.

On paper, it looks elegant.

Flash is cheaper per gigabyte than DRAM. If you can give it more bandwidth and reduce the distance to the processor, you’ve potentially unlocked larger working sets without HBM pricing.

Here’s the landscape as it stands:

| Technology | Typical Latency | Bandwidth | Cost per GB | Primary Role |
|---|---|---|---|---|
| HBM (Stacked DRAM) | Nanoseconds | Terabytes/sec | Very High | Active AI training memory |
| DDR DRAM | ~100 ns | High | High | System memory |
| HBF (Proposed) | Microseconds | Medium-High | Lower | Inference expansion tier |
| NVMe SSD | Microseconds–Milliseconds | Moderate | Low | Bulk storage |

The HBF row is where the argument lives.

Flash memory does not behave like DRAM. It never has. NAND stores charge in floating gates or charge traps. Data is read and programmed a page at a time, and a page can't be reprogrammed until it has been erased. Erases happen in whole blocks. You don’t overwrite a byte. You reset a region.
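To make the erase-before-write rule concrete, here is a minimal toy model in Python. It is a sketch, not how any real controller works: the `NandBlock` class, the four-page block size, and the naive `rewrite` strategy are all assumptions made up for illustration. Real drives remap writes through a flash translation layer instead of erasing in place.

```python
class NandBlock:
    """Toy model of one NAND erase block (hypothetical, for illustration only)."""

    def __init__(self, pages_per_block=4):
        # None marks a page in the erased, programmable state.
        self.pages = [None] * pages_per_block
        self.erase_count = 0

    def program(self, page_index, data):
        # A page can only be programmed while it is erased.
        if self.pages[page_index] is not None:
            raise ValueError("page already programmed; erase the block first")
        self.pages[page_index] = data

    def erase(self):
        # Erase resets every page in the block, never just one.
        self.pages = [None] * len(self.pages)
        self.erase_count += 1

    def rewrite(self, page_index, data):
        # Naive in-place update: save the other live pages, erase the block,
        # then program everything back along with the new data.
        survivors = {
            i: d for i, d in enumerate(self.pages)
            if d is not None and i != page_index
        }
        self.erase()
        for i, d in survivors.items():
            self.program(i, d)
        self.program(page_index, data)
        # Pages physically programmed to satisfy one logical write.
        return len(survivors) + 1


block = NandBlock()
block.program(0, b"weights-a")
block.program(1, b"weights-b")
pages_written = block.rewrite(0, b"weights-a2")
print(pages_written, block.erase_count)
```

One logical update cost two page programs and a full block erase. That gap between what was asked for and what the chip actually did is the reason writes are the expensive side of flash.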

That’s why flash latency sits in microseconds, not nanoseconds. Sensing a page takes tens of microseconds, and programs and erases take longer still. Packaging doesn’t change electron tunneling time.

If you want a refresher on how erase behavior impacts performance, read why NAND flash erase speed still matters.

So if HBF is going to work, it works because inference is read-dominant. Streaming patterns are friendlier to flash. Predictability helps. Random writes hurt.
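A rough way to see why streaming reads suit flash is to model each read as a fixed access latency plus the time to move the payload. The sketch below does that in Python. The 50-microsecond latency and 500 GB/s bandwidth are placeholder assumptions for a hypothetical flash tier, not figures from any product.

```python
def read_time_s(num_bytes, latency_s, bandwidth_bytes_per_s):
    """Time for one read: fixed access latency plus time to stream the payload."""
    return latency_s + num_bytes / bandwidth_bytes_per_s


# Placeholder figures for a hypothetical flash tier, not measurements.
LATENCY_S = 50e-6    # assumed 50 microseconds per access
BANDWIDTH = 500e9    # assumed 500 GB/s aggregate bandwidth


def latency_share(num_bytes):
    """Fraction of the total read time spent waiting on access latency."""
    total = read_time_s(num_bytes, LATENCY_S, BANDWIDTH)
    return LATENCY_S / total


small = latency_share(4 * 1024)    # one 4 KiB random read
large = latency_share(1024 ** 3)   # one 1 GiB sequential stream

print(f"4 KiB read: {small:.1%} of time is latency")
print(f"1 GiB read: {large:.1%} of time is latency")
```

Under these assumptions, a small random read is almost entirely latency, while a large sequential stream is almost entirely bandwidth. The microsecond penalty only disappears when each access moves a lot of data.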

Now zoom out further.

CXL memory expansion doesn’t change memory type at all. It extends DRAM outward. Compute Express Link allows large external pools of DRAM to behave coherently with the CPU. Latency rises compared to local DIMMs, but capacity scales without adding sockets.

Near-memory compute moves in the opposite direction. Instead of dragging data to a processor, it embeds logic closer to memory. Logic layers inside HBM stacks. Small compute engines inside arrays. The goal is fewer round trips.

Computational storage pushes filtering into the SSD itself. If only a fraction of stored data is useful, process it inside the drive before sending it across PCIe. Less movement. Less power.
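The payoff is simple arithmetic. A minimal sketch, assuming a hypothetical scan where the host only needs a fraction of what is stored (the function name, the 1 TB scan size, and the 5% selectivity are illustrative assumptions):

```python
def bytes_over_pcie(stored_bytes, selectivity, filter_in_drive):
    """Bytes crossing the link for one scan.

    selectivity is the fraction of stored data the host actually needs.
    """
    if not 0.0 <= selectivity <= 1.0:
        raise ValueError("selectivity must be between 0 and 1")
    if filter_in_drive:
        # Only matching data leaves the drive.
        return stored_bytes * selectivity
    # Host-side filtering: everything is shipped, then mostly discarded.
    return stored_bytes


stored = 1_000_000_000_000  # 1 TB scanned (illustrative)
host_side = bytes_over_pcie(stored, 0.05, filter_in_drive=False)
in_drive = bytes_over_pcie(stored, 0.05, filter_in_drive=True)
reduction = host_side / in_drive

print(f"host-side filter moves {host_side / 1e9:.0f} GB")
print(f"in-drive filter moves {in_drive / 1e9:.0f} GB")
```

This ignores the power the drive spends doing the filtering, so treat it as an upper bound on the savings rather than a prediction.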

All of these ideas orbit the same constraint: bandwidth costs energy.
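That constraint can be put in numbers with one multiplication: bits per second times energy per bit. The Python sketch below uses assumed picojoule-per-bit costs for a short on-package link and a longer off-package one. Both values are hypothetical placeholders, since real figures depend on process, signaling, and distance.

```python
def transfer_power_watts(bandwidth_bytes_per_s, picojoules_per_bit):
    """Sustained power spent purely on moving bits at a given bandwidth."""
    bits_per_s = bandwidth_bytes_per_s * 8
    return bits_per_s * picojoules_per_bit * 1e-12


# Assumed, illustrative energy costs. Not vendor figures.
SHORT_LINK_PJ_PER_BIT = 4.0    # hypothetical on-package link
LONG_LINK_PJ_PER_BIT = 20.0    # hypothetical off-package link

bandwidth = 1e12  # 1 TB/s sustained

short_w = transfer_power_watts(bandwidth, SHORT_LINK_PJ_PER_BIT)
long_w = transfer_power_watts(bandwidth, LONG_LINK_PJ_PER_BIT)

print(f"short link: {short_w:.0f} W")
print(f"long link:  {long_w:.0f} W")
```

Same bandwidth, same data, and the only variable is how much each bit costs to move. Multiply the difference by every accelerator in a data center and the motivation for shorter paths is clear.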

HBM attacks bandwidth directly. CXL stretches DRAM capacity. Near-memory compute reduces movement. Computational storage trims waste. HBF tries to bend economics in the middle.

None of them break physics. They rearrange trade-offs.

At hyperscale, trade-offs decide architecture. Speed is impressive. Efficiency keeps the lights on.
